Workload-Aware Preemption arrives, DRA goes default-on, PSI metrics graduate to GA, and pod-level resources finally get a Beta. Here’s what actually changed in Kubernetes 1.36 resource management — with commands and test results from a kubeadm cluster on GCE.

Kubernetes 1.36 resource management is the release where six previously opt-in features become default-on or GA across the device, workload, runtime, and observability layers. It closes the integer-GPU plumbing gap, fixes the partial-preemption failure mode for distributed training, and surfaces kernel-level pressure signals as first-class data on the kubelet Summary API.

TL;DR. Kubernetes 1.36 resource management is less about brand-new mechanics and more about the defaults catching up to two years of accumulated AI workload scar tissue. Five resource-management features that previously hid behind feature gates now ship enabled by default. One genuinely new Alpha, Workload-Aware Preemption (KEP-5710), finally treats AI training PodGroups as one preemption unit. PSI metrics graduate to GA, although the PSI-driven node conditions that would sit on top of them have not shipped yet (Section 5.3). Read the 1.34 release blog and the 1.35 deep dive for the primitives and semantics Kubernetes 1.36 resource management builds on; this post is the operational story of what 1.36 turns on without asking you.

| Layer | 1.34 (Aug 2025) | 1.35 (Dec 2025) | 1.36 (Apr 2026) |
|---|---|---|---|
| Devices | DRA Core GA | Feature gate locked | Partitionable Devices, Consumable Capacity, Device Taints/Tolerations, Resource Health Status (all Beta, default-on) |
| Workloads | — | Gang Scheduling Alpha (spec.workloadRef) | Gang Scheduling Beta + Workload-Aware Preemption Alpha (KEP-5710) |
| Runtime | In-Place Resize Beta | In-Place Resize GA (container scope) | Pod-Level Resources Beta default-on |
| Observability | PSI Metrics Beta | PSI refinement | PSI Metrics GA (PSI-driven node conditions not yet shipped) |
| Honorable mention | — | — | DRA Device Attributes Downward API (KEP-5304) Alpha |

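The default-on Beta and GA entries in the rightmost column are active the moment the cluster runs 1.36, and most of them used to require an explicit --feature-gates flag. The Alpha entries (KEP-5710 and KEP-5304) still need explicit gates, and Section 3.1 lists the full flag set the gang-scheduling demo needed.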

Key Takeaways

- Kubernetes 1.36 resource management is a defaults-flip release. Five features that previously required --feature-gates flags now ship enabled by default: DRA Partitionable Devices, DRA Consumable Capacity, DRA Device Taints/Tolerations, Resource Health Status, and Pod-Level Resources.
- Workload-Aware Preemption (KEP-5710) is the headline new Alpha. For the first time, the kube-scheduler treats a PodGroup as one preemption unit and refuses to start a preemption it cannot finish, eliminating partial-eviction zombie pods in distributed AI training.
- DRA setup on kubeadm 1.36 needs four fixes the release notes don’t advertise: --runtime-config=resource.k8s.io/v1alpha3=true, the correct feature-gate names (DRAResourceClaimDeviceStatus, not DRAResourceClaimStatus), the NGC Helm repo (not the OCI path), and --set featureGates.MPSSupport=true for NVIDIA MPS sharing.
- Kubernetes PSI metrics are GA but PSI-driven node conditions are not. The cAdvisor and Summary API endpoints surface CPU/memory/IO pressure as structured data; the auto-tainting and PSI-driven node-pressure eviction layers above them still require an external control loop.
- Vendor implementations lag the K8s spec on several fronts. NVIDIA DRA driver chart 25.12.0 ships MPS sharing once featureGates.MPSSupport=true is set, but does not yet implement NVMLDeviceHealthCheck (so allocatedResourcesStatus health stays Unknown) or KEP-5304 device-attribute mounting. Date-stamp your reproductions.
- The 1.36 primitives compose with policy layers. Native gang scheduling and DRA give correctness; ScaleOps GPU Optimization, Smart Pod Placement, Node Optimization, Karpenter Optimization, Java Optimization, Automated Pod Rightsizing, and Replica Optimization run the policy and capacity strategy on top of the new K8s primitives without replacing any of them.

What Is Kubernetes 1.36 Resource Management?

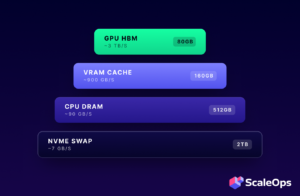

Kubernetes 1.36 resource management is the cluster-side surface for declaring, scheduling, enforcing, and observing CPU, memory, hugepages, ephemeral storage, and DRA-managed devices on Pods and containers. In 1.36 it spans five primitives that all moved at once: pod-level spec.resources (Beta), DRA partitionable devices and consumable capacity (Beta, default-on), DRA device taints and resource health status (Beta, default-on), Workload-Aware Preemption over PodGroups (Alpha), and PSI metrics (GA).

The traditional building blocks still apply unchanged: requests vs limits, QoS classes (Guaranteed, Burstable, BestEffort), Node Allocatable, ResourceQuota and LimitRange for namespace-level resource governance, and the kubelet eviction manager that watches the MemoryPressure, DiskPressure, and PIDPressure node conditions to trigger node-pressure eviction. 1.36 adds new fields on top of them. The kubelet’s Topology Manager, CPU Manager, and Memory Manager continue to coordinate NUMA-aware placement and cgroup-level pinning beneath all of this, and the familiar failure modes (OOMKilled when a container exceeds its memory limit, CPU throttling under CFS quota) are unchanged. The autoscaling loop on top (horizontal pod autoscaling, vertical pod autoscaling, and node autoscaling via Cluster Autoscaler or Karpenter) is also unchanged; it simply has new surfaces to consume. Operationally, Prometheus scrapes the new PSI series from the kubelet’s /metrics/cadvisor endpoint, and anything that reads the kubelet Summary API gets the same data as structured JSON.

1. The Defaults Flip — What Actually Changed

Kubernetes resource management features have a familiar life cycle: alpha behind a gate for two or three releases, beta but still opt-in for one more cycle, and then default-on. That last step — when a feature stops being something you have to ask for — is when most clusters actually start using it.

Kubernetes 1.36 is that moment for an unusually large chunk of the resource-management surface. Six features hit “default-on Beta” or “GA” simultaneously, and they happen to be the six that mattered most for AI workloads. Most of the 1.36 release notes for resource management are not about new mechanics. They are about defaults.

We’ll walk the four-layer stack from device → workload → runtime → observability, classifying each feature as either brand-new alpha (KEP-5710 + KEP-5304), first-time default-on (Partitionable, Consumable, Taints, Health, Pod-Level Resources), or GA promotion that changes the operational story (PSI). Every demo runs on a real kubeadm 1.36.0 cluster on GCE — outputs are verbatim, gotchas are documented, and where vendor support hasn’t caught up to the K8s spec we say so plainly.

2. Devices — DRA goes on by default

Four DRA features that previously hid behind feature gates ship enabled in 1.36. Together they take the integer-GPU device-plugin model — nvidia.com/gpu: 1 and you own the whole card whether you use 5% of it or 95% — and replace it with primitives that express how modern accelerators are partitioned, shared, tainted, and recovered when they fail.

Lab. kubeadm 1.36.0, GCE g2-standard-4 (1× NVIDIA L4), Ubuntu 24.04, Calico, NVIDIA datacenter driver 580.142, NVIDIA DRA driver chart 25.12.0 via the NGC Helm repo.

2.1 DRA Bring-Up Gotchas on kubeadm 1.36

Eleven setup gotchas bit us across the article. They are numbered globally so cross-references stay clear:

| # | Gotcha | Where it’s explained |
|---|---|---|
| 1 | Ghost feature-gate name (DRAResourceClaimStatus doesn’t exist) | 2.1 below |
| 2 | Runtime-config required for DeviceTaintRule (v1alpha3) | 2.1 below |
| 3 | Helm OCI path is broken; use NGC Helm repo | 2.1 below |
| 4 | featureGates.MPSSupport=false by default | 2.1 below |
| 5 | Tolerations on the Pod, not the ResourceClaim | 2.1 below |
| 6 | YAML 1.1 boolean trap (y/n parse as booleans) | 2.1 below |
| 7 | Driver-side health gate (NVMLDeviceHealthCheck) | 2.3 |
| 8 | Ghost feature gates for Workload API (WorkloadSupport, PodGroupPriority) | 3.1 |
| 9 | Workload/PodGroup at v1alpha2, not v1alpha1 | 3.1 |
| 10 | Bogus PriorityClassName validated at admission time | 3.1 |
| 11 | GA gate warnings for InPlacePodVerticalScaling and KubeletPSI | 4 |

Each callout below uses the global Gotcha #N label. Six of the eleven cluster around DRA bring-up:

- Gotcha #1: Ghost feature-gate name. DRAResourceClaimStatus is in the release notes but does not exist on the v1.36.0 binary. The real gates are DRAResourceClaimDeviceStatus (KEP-4817) and DRAResourceClaimGranularStatusAuthorization.
- Gotcha #2: Runtime-config required. DeviceTaintRule is Beta but lives at resource.k8s.io/v1alpha3. Without --runtime-config=resource.k8s.io/v1alpha3=true on kube-apiserver, the type does not register. This is the same shape as the Section 3 PodGroup runtime-config.
- Gotcha #3: Helm OCI path is broken. With Helm 3.20+, oci://nvcr.io/nvidia/k8s-dra-driver-gpu returns "manifest does not contain minimum number of descriptors". Use the NGC Helm repo and the chart name nvidia/nvidia-dra-driver-gpu.
- Gotcha #4: featureGates.MPSSupport=false by default. Unless you set it to true, the kubelet plugin returns the misleading "unknown GPU sharing strategy: MPS". (See NVIDIA issue #762 for the same symptom on TimeSlicing.)
- Gotcha #5: Tolerations go on the Pod, not the ResourceClaim. The KEP-5055 README implies otherwise, but strict decoding rejects resourceclaim.spec.devices.tolerations. Use pod.spec.tolerations.
- Gotcha #6: YAML 1.1 boolean trap. Unquoted values such as name: y and request: y parse as booleans. Quote them.
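Gotcha #6 is cheap to catch before kubectl apply ever sees the manifest. The sketch below is a hypothetical pre-flight lint, not part of kubectl or the NVIDIA chart: it flags unquoted values that belong to the YAML 1.1 boolean set (y, n, yes, no, on, off, true, false and their capitalized forms). It assumes simple one-pair-per-line YAML and is not a full parser.

```python
import re

# Boolean literals of the YAML 1.1 "bool" type. A YAML 1.1 parser turns these
# into true/false when they appear unquoted, which is the Gotcha #6 trap.
YAML11_BOOLS = {
    "y", "Y", "yes", "Yes", "YES", "n", "N", "no", "No", "NO",
    "true", "True", "TRUE", "false", "False", "FALSE",
    "on", "On", "ON", "off", "Off", "OFF",
}

# One "key: value" pair per line, optionally behind a "- " list marker;
# captures the key and the bare value, ignoring a trailing "# comment".
KV_LINE = re.compile(r"^\s*(?:-\s+)?([\w./-]+):\s+([^#]*?)\s*(?:#.*)?$")


def find_boolean_traps(manifest: str) -> list[tuple[int, str, str]]:
    """Return (line_number, key, value) for unquoted values YAML 1.1 reads as booleans."""
    traps = []
    for lineno, line in enumerate(manifest.splitlines(), start=1):
        match = KV_LINE.match(line)
        if match and match.group(2) in YAML11_BOOLS:
            traps.append((lineno, match.group(1), match.group(2)))
    return traps


if __name__ == "__main__":
    sample = "requests:\n- name: y\n  exactly: { deviceClassName: gpu.nvidia.com }\n"
    for lineno, key, value in find_boolean_traps(sample):
        print(f"line {lineno}: {key}: {value} -> quote it as {key}: \"{value}\"")
```

Quoted values such as name: "gpu" pass untouched, which is exactly the fix Gotcha #6 recommends.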

After bootstrap, the chart registers six DeviceClasses (gpu.nvidia.com, mig.nvidia.com, vfio.gpu.nvidia.com, plus three compute-domain classes) and a single ResourceSlice per node with rich device attributes — productName: NVIDIA L4, architecture: Ada Lovelace, cudaComputeCapability: 8.9.0, capacity.memory: 23034Mi, plus the full PCI bus ID and device UUID. The level of self-description is substantially richer than what the integer nvidia.com/gpu: 1 extended resource exposed under the device plugin model.

2.2 DeviceTaintRule (KEP-5055)

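Same operational primitive as node taints, applied one level lower. When a GPU starts throwing ECC errors, taint it. With NoSchedule, claims that don’t tolerate the taint stop landing on the device; with NoExecute, workloads already using it that don’t tolerate it get evicted. Either way, a diagnostic pod can still land on it on purpose.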

```yaml
apiVersion: resource.k8s.io/v1alpha3
kind: DeviceTaintRule
metadata: { name: gpu-fault }
spec:
  deviceSelector:
    driver: gpu.nvidia.com    # matches ResourceSlice.spec.driver
                              # NOT deviceClassName
  taint:
    key: health.scaleops.io/test
    value: fault
    effect: NoSchedule
```

A pod with a ResourceClaim against gpu.nvidia.com and no toleration stays Pending. The diagnostic-pod escape hatch is a Pod-level toleration (Gotcha #5):

```yaml
spec:
  tolerations:
  - key: health.scaleops.io/test
    operator: Equal
    value: fault
    effect: NoSchedule
  resourceClaims:
  - { name: gpu, resourceClaimName: diag-claim }
```

Delete the DeviceTaintRule and normal claims schedule again.
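Device taints reuse the matching rules of node taints, which is why the Pod-level toleration above unblocks the diagnostic claim. A minimal sketch of those rules; Taint and Toleration here are illustrative stand-ins rather than the Kubernetes API types, and the scheduler’s DRA code path may differ in edge cases.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str  # "NoSchedule" or "NoExecute"


@dataclass(frozen=True)
class Toleration:
    key: str = ""
    operator: str = "Equal"  # "Equal" (the default) or "Exists"
    value: str = ""
    effect: str = ""         # empty matches every effect


def tolerates(tol: Toleration, taint: Taint) -> bool:
    """Apply the taint-matching rules: effect first, then key, then operator/value."""
    if tol.effect and tol.effect != taint.effect:
        return False
    if tol.operator == "Exists":
        # Exists ignores the value; an empty key with Exists tolerates every taint.
        return tol.key in ("", taint.key)
    return tol.key == taint.key and tol.value == taint.value


def blocked_by(taints: list[Taint], tolerations: list[Toleration]) -> list[Taint]:
    """Return the taints that no toleration covers."""
    return [t for t in taints if not any(tolerates(tol, t) for tol in tolerations)]
```

With the gpu-fault taint, an empty toleration list leaves the taint uncovered (the Pending case), while the Equal toleration from the Pod spec covers it.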

2.3 Resource Health Status (KEP-4680)

When the GPU is unhealthy but the pod is still running, Pod.status historically said nothing. 1.36 surfaces per-device health on each container’s status:

```
kubectl get pod g -o jsonpath='{.status.containerStatuses[*].allocatedResourcesStatus}' | jq
[{
  "name": "claim:g/gpu",
  "resources": [{
    "health": "Unknown",
    "resourceID": "k8s.gpu.nvidia.com/claim=396d6a00-...-gpu-0"
  }]
}]
```

The field populates and the JSON structure matches the KEP, but the health value stays at "Unknown" even when nvidia-persistenced is stopped on the host, and the value never transitions to "Unhealthy".

Gotcha #7 — driver-side health gate. ResourceHealthStatus=true on K8s surfaces the field. It does not enable the NVML health checks that populate it. The NVIDIA DRA driver kubelet plugin has its own internal NVMLDeviceHealthCheck gate that defaults false in chart 25.12.0. The K8s release notes don’t mention this dependency.
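Until the driver-side gate is on, the practical check is "anything not Healthy". A sketch that walks the pod object from kubectl get pod -o json and reports every allocated device whose health is not Healthy; the field names follow the output above, and the container name used in the test is hypothetical.

```python
import json


def unhealthy_devices(pod_json: str) -> list[tuple[str, str, str]]:
    """List (container, resourceID, health) for every allocated device not reported Healthy.

    Reads .status.containerStatuses[*].allocatedResourcesStatus from a pod object.
    A missing health value is treated as Unknown.
    """
    pod = json.loads(pod_json)
    findings = []
    for status in pod.get("status", {}).get("containerStatuses", []):
        for entry in status.get("allocatedResourcesStatus") or []:
            for resource in entry.get("resources") or []:
                health = resource.get("health", "Unknown")
                if health != "Healthy":
                    findings.append((status["name"], resource["resourceID"], health))
    return findings
```

With chart 25.12.0 every device reports Unknown, so a check like this flags everything until NVMLDeviceHealthCheck is enabled driver-side; filtering on Unhealthy alone is the quieter option.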

2.4 MPS sharing via GpuConfig

KEP-4815 Partitionable Devices needs MIG hardware to demo (A100/H100). On an L4 the closest equivalent is NVIDIA’s MPS sharing, expressed via GpuConfig in the ResourceClaim:

```yaml
apiVersion: resource.k8s.io/v1
kind: ResourceClaim
metadata: { name: gpu-mps-shared }    # illustrative name, not from the lab
spec:
  devices:
    requests:
    - name: "gpu"                       # quoted (Gotcha #6)
      exactly: { deviceClassName: gpu.nvidia.com }
    config:
    - opaque:
        driver: gpu.nvidia.com
        parameters:
          apiVersion: resource.nvidia.com/v1beta1
          kind: GpuConfig
          sharing:
            strategy: MPS
            mpsConfig:
              defaultActiveThreadPercentage: 50
              defaultPinnedDeviceMemoryLimit: 10Gi
```

With featureGates.MPSSupport=true (Gotcha #4), the claim allocates and the NVIDIA MPS Control Daemon spawns automatically. Two pods sharing one MPS GPU need one shared ResourceClaim referenced by both; two separate exclusive claims compete for the device, and the second stays Pending. The two scheduler contracts are distinct: MPS shares a single allocation across multiple consumer pods, whereas partitionable devices expose the underlying hardware as several independently allocatable ResourceSlice entries.
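A quick way to confirm that the pods you expect to share an MPS GPU really reference one claim is to group them by claim name. A minimal sketch over pod objects as they appear in the items list of kubectl get pods -o json; the pod and claim names in the test are hypothetical.

```python
from collections import defaultdict


def claim_consumers(pods: list[dict]) -> dict[str, list[str]]:
    """Map each ResourceClaim name to the pods that reference it directly.

    Only spec.resourceClaims[].resourceClaimName entries count: a
    resourceClaimTemplateName entry makes Kubernetes generate a separate
    claim per pod, so those pods never share one allocation.
    """
    consumers: dict[str, list[str]] = defaultdict(list)
    for pod in pods:
        for ref in pod.get("spec", {}).get("resourceClaims", []):
            claim = ref.get("resourceClaimName")
            if claim:
                consumers[claim].append(pod["metadata"]["name"])
    return dict(consumers)


def shared_claims(pods: list[dict]) -> dict[str, list[str]]:
    """Keep only claims referenced by two or more pods (the MPS sharing pattern)."""
    return {claim: names for claim, names in claim_consumers(pods).items() if len(names) > 1}
```

An empty result for pods you meant to co-locate on one MPS GPU points at the two-exclusive-claims pattern that leaves the second pod Pending.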

2.5 What 1.36 ships vs what we could validate on L4

| K8s 1.36 feature | Validated on L4? | Note |
|---|---|---|
| DeviceTaintRule (KEP-5055) | ✅ | After Gotcha #2 fix |
| allocatedResourcesStatus (KEP-4680) | ✅ field populates | Health stays Unknown (Gotcha #7) |
| MPS via GpuConfig (vendor) | ✅ single shared claim | Two-claim pattern unsupported on one GPU |
| Partitionable Devices (KEP-4815, framework) | ⚠️ no MIG hardware | Needs A100/H100 to prove end-to-end |
| Consumable Capacity (framework) | ⚠️ vendor pending | Chart 25.12.0 doesn’t advertise allowMultipleAllocations on gpu.nvidia.com |

Two of five rows are honest “different hardware” or “newer vendor” gaps — not failures on the K8s side. NVIDIA’s roadmap covers both.

2.6 Pick the right DRA model

| Model | Sharing | Hard isolation | When to use |
|---|---|---|---|
| Device plugin (nvidia.com/gpu: N) | None | N/A | Legacy clusters, no shared GPUs |
| DRA whole-device (1.34+) | None | N/A | DRA-native pipelines, no fractional needs |
| DRA partitionable (1.36 Beta default) | 1:1 to partition | Hardware-dependent (MIG yes) | A100/H100/B200 |
| DRA consumable capacity (1.36 Beta default) | N:1 to device | Vendor-enforced | Inference fleets sharing a big device |
| MPS via vendor GpuConfig | M consumers per claim | None | L4-class, compute-interference-tolerant |
| MIG via vendor partition | Hardware slice | Hardware-enforced | Strict isolation; A100/H100/B200 only |

3. Workloads — Gang Scheduling grows up

If you covered Gang Scheduling Alpha in the 1.35 deep-dive, the short version of what changed in 1.36 is: the Workload API graduates to Beta, PodGroupSpec gains a priorityClassName, and — the actual headline — KEP-5710 Workload-Aware Preemption lands as Alpha. For the first time, the kube-scheduler treats a PodGroup as a single unit when deciding who to evict to make room. No more zombie pods stranded after partial preemption.

Lab. Fresh single-node kubeadm 1.36.0, GCE e2-standard-4 (4 vCPU), Calico, no GPU.

3.1 Setup gotchas

- Gotcha #8: Ghost feature gates. WorkloadSupport and PodGroupPriority are in the K8s release notes but rejected by v1.36.0 as "unrecognized feature gate". The combination that actually works:

```
--feature-gates=WorkloadAwarePreemption=true,GangScheduling=true,GenericWorkload=true,WorkloadWithJob=true
--runtime-config=scheduling.k8s.io/v1alpha2=true
```

- Gotcha #9: API at v1alpha2. The Workload/PodGroup types are at scheduling.k8s.io/v1alpha2, not v1alpha1 as the 1.35 docs (and the 1.35 ScaleOps blog) reference. Pod→PodGroup linkage is the first-class field spec.schedulingGroup.podGroupName; the old annotation plus scheduling-gate pattern is gone.
- Gotcha #10: Bogus PriorityClassName validated at admission. kubectl apply of a PodGroup referencing a non-existent PriorityClass returns:

```
Error from server (Forbidden): podgroups.scheduling.k8s.io "training-bogus"
is forbidden: no PriorityClass with name this-does-not-exist was found
```

3.2 KEP-5710: preempting a group as a group

Pre-1.36 preemption operates at pod scope. When a higher-priority pod cannot fit, the scheduler picks the smallest set of individual lower-priority pods to evict. That pattern works well for stateless web workloads, but it breaks distributed AI training where the eight ranks of a single job depend on each other and partial scheduling leaves the workload non-functional. The classic failure mode runs as follows: the scheduler preempts one pod of training A to make room for training B, training B still does not fit on the remaining capacity, and the remaining seven pods of training A sit idle holding GPUs because the gang policy refuses to run with seven of eight ranks. Neither workload makes progress.

Workload-Aware Preemption changes the unit of decision. With the gate on, the scheduler treats a PodGroup as one entity — it preempts whole low-priority PodGroups, never partial groups, and only after verifying the high-priority group can actually fit. If the new group cannot be placed even after preemption, no eviction occurs and the existing workloads continue to run undisturbed.
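The decision rule above (whole groups only, and no eviction unless the incoming group then fits) can be modeled in a few lines. This is a simplified sketch, not the kube-scheduler algorithm: it assumes a single node, CPU only, and lowest-priority-first victim selection, whereas the real scheduler evaluates nodes, filters, and every resource type.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PodGroup:
    name: str
    priority: int
    ranks: int
    cpu_per_rank_m: int  # CPU request per rank, in millicores

    @property
    def cpu_m(self) -> int:
        return self.ranks * self.cpu_per_rank_m


def plan_group_preemption(
    capacity_m: int, reserved_m: int, running: list[PodGroup], incoming: PodGroup
) -> Optional[list[PodGroup]]:
    """Return whole PodGroups to evict so `incoming` fits, [] if it already fits,
    or None if it cannot fit even after evicting every lower-priority group.
    """
    free_m = capacity_m - reserved_m - sum(g.cpu_m for g in running)
    if incoming.cpu_m <= free_m:
        return []
    victims: list[PodGroup] = []
    for group in sorted(running, key=lambda g: g.priority):
        if group.priority >= incoming.priority:
            break  # equal or higher priority groups are never victims
        victims.append(group)
        free_m += group.cpu_m
        if incoming.cpu_m <= free_m:
            return victims
    return None  # no partial plan: existing groups keep running untouched
```

With the Section 3.3 shapes (a 4000m node and two 4 × 600m gangs) plus a hypothetical 950m of system-pod requests, the plan evicts training-a as a whole. An 8-rank gang of the same shape returns None, so nothing is evicted.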

3.3 Demo — group-level preemption

Two PriorityClasses (training-low: 100, training-high: 1000), two PodGroups, identical resource shape. We size each gang at 4 ranks × 600m CPU (2400m per gang), so one gang fits on the 4 vCPU node alongside system pods while two cannot. At 800m per rank (3200m per gang), a single gang already overcommitted the node.

```yaml
apiVersion: scheduling.k8s.io/v1alpha2
kind: PodGroup
metadata: { name: training-a }
spec:
  priorityClassName: training-low
  disruptionMode: PodGroup
  schedulingPolicy:
    gang: { minCount: 4 }
---
apiVersion: batch/v1
kind: Job
metadata: { name: training-a }
spec:
  parallelism: 4
  template:
    spec:
      schedulingGroup:              # the new 1.36 first-class field
        podGroupName: training-a
      priorityClassName: training-low
      restartPolicy: Never
      containers:
      - name: trainer
        image: busybox:1.36
        command: ["sh", "-c", "sleep 3600"]
        resources:
          requests: { cpu: 600m, memory: 256Mi }
          limits:   { cpu: 600m, memory: 256Mi }
```

training-b is the same YAML with name: training-b, podGroupName: training-b, and priorityClassName: training-high. Apply A first, wait for 4/4 Running, then submit B:

```
kubectl get events --sort-by=.lastTimestamp -n default | tail
45s   Normal   Preempted   pod/training-a-s8h5g
      Preempted by podgroup bda07530-da19-4aa9-957f-ad8ceb146e2c on node cluster
45s   Normal   Preempted   pod/training-a-c8hqm   ...same podgroup UID...
45s   Normal   Preempted   pod/training-a-h7fkt   ...same podgroup UID...
45s   Normal   Preempted   pod/training-a-bz5z9   ...same podgroup UID...
```

All four Preempted events carry the same relative timestamp: a batched group decision, not pod-by-pod eviction. The message names the preemptor by PodGroup UID rather than by an individual pod. That’s the API change that tells you you’re on the new code path. training-b ends 4/4 Running on the same node within seconds.
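On a busier cluster than the demo node, grouping Preempted events by preemptor UID makes the batched decision easy to confirm. A sketch that reads kubectl get events -o json and assumes the message text matches the "Preempted by podgroup <uid>" shape observed above; that wording is not a documented API contract and may change between releases.

```python
import json
import re
from collections import defaultdict

# Message shape observed in the demo output above; a UID is 36 hex-and-dash characters.
PREEMPTOR = re.compile(r"Preempted by podgroup ([0-9a-f-]{36})")


def preemptions_by_podgroup(events_json: str) -> dict[str, list[str]]:
    """Group Preempted events (kubectl get events -o json) by the preemptor PodGroup UID."""
    victims: dict[str, list[str]] = defaultdict(list)
    for event in json.loads(events_json).get("items", []):
        if event.get("reason") != "Preempted":
            continue
        match = PREEMPTOR.search(event.get("message", ""))
        if match:
            victims[match.group(1)].append(event["involvedObject"]["name"])
    return dict(victims)
```

A single UID key holding all four training-a pods is the group-level signature. Events whose message names something other than a podgroup do not match the pattern and are left out.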

3.5 Native Kubernetes Gang Scheduling vs Kueue, Volcano, and YuniKorn

| Capability | Native (1.36) | Kueue | Volcano | YuniKorn |
|---|---|---|---|---|
| All-or-nothing gang placement | ✅ Beta | ✅ via Workload | ✅ | ✅ |
| Group-level preemption | ✅ Alpha (KEP-5710) | ⚠️ inherited | ✅ | ✅ |
| Delayed preemption | ✅ Alpha | ❌ | ⚠️ partial | ⚠️ partial |
| Hierarchical queues | ❌ | ✅ | ✅ | ✅ |
| Topology-aware (NUMA, NVLink) | ❌ | ⚠️ via plugins | ✅ | ⚠️ |
| Native batch (PyTorchJob, MPIJob, RayJob) | ❌ | ✅ | ✅ | ✅ |
| Best fit | one team, one queue, no plugins | mixed batch + serving on shared GPU | HPC topology | strict multi-tenant quota |

Kubernetes 1.36 makes the in-tree scheduler competent at gang scheduling, which is a meaningful shift from the prior release where the same correctness required a third-party scheduler. It does not make Kubernetes sufficient for clusters where multiple teams contend for a GPU pool or where MPI training depends on NUMA-aware placement, and for those cases Kueue or Volcano continues to be the right layer to add alongside the native primitives.

4. Runtime — Pod-Level Resources Beta

If the 1.35 deep-dive was about container-scope in-place resize finally going GA, 1.36 adds the missing scope: the Pod itself. Pod.spec.resources is now a Beta field, enabled by default, alongside container-level spec.containers[*].resources. The two coexist; where a container sets its own values, those win for that container.

Lab. Fresh kubeadm 1.36.0, GCE e2-standard-2 (2 vCPU), Ubuntu 24.04, cgroup v2.

Gotcha #11: GA gate warnings. InPlacePodVerticalScaling and KubeletPSI are GA in 1.36. Setting them explicitly returns "Warning: setting GA feature gate. It will be removed in a future release." PodLevelResources does not emit that warning because it is still Beta: default-on, but technically toggleable.

4.1 The pod-scope spec.resources field

```yaml
apiVersion: v1
kind: Pod
metadata: { name: pod-only-resources }
spec:
  resources:
    requests: { cpu: 500m, memory: 512Mi }
    limits:   { cpu: 1000m, memory: 1Gi }
  containers:
  - { name: main,    image: busybox:1.36, command: ["sh", "-c", "sleep 3600"] }
  - { name: sidecar, image: busybox:1.36, command: ["sh", "-c", "sleep 3600"] }
```

Containers inherit the pod-level envelope; the kubelet writes the limits to the pod-level cgroup slice:

```
sudo cat .../kubepods-burstable-pod995970c9_..._86a0_..slice/cpu.max
100000 100000        # 100ms quota / 100ms period = 1.0 CPU
sudo cat .../memory.max
1073741824           # 1 GiB exactly
```

Container-level fields, when set, shadow (don’t replace) the pod-level values for that specific container. Pod scope only accepts cpu, memory, and hugepages-*. Try to declare ephemeral-storage at pod scope:

```
The Pod "pod-bad-resources" is invalid:
spec.resources.requests[ephemeral-storage]: Unsupported value: "ephemeral-storage":
supported values: "cpu", "hugepages-", "memory"
```

Extended resources (GPUs, custom devices) and ephemeral storage stay container-scope.
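The cgroup values above follow from simple unit conversions, and the pod-scope allow-list is short enough to check client-side. A sketch under stated assumptions: the default 100ms CFS period (what the cpu.max output shows), binary memory suffixes only, and an allow-list copied from the validation message above. It is a pre-flight aid, not the kubelet’s or the API server’s code.

```python
import re

CFS_PERIOD_US = 100_000  # default 100ms period, as in the cpu.max output above

BINARY_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}


def cpu_max(limit: str) -> str:
    """Convert a CPU limit such as '1000m' or '1.5' into a cgroup v2 cpu.max line."""
    millicores = int(limit[:-1]) if limit.endswith("m") else round(float(limit) * 1000)
    quota_us = millicores * CFS_PERIOD_US // 1000
    return f"{quota_us} {CFS_PERIOD_US}"


def memory_max(limit: str) -> int:
    """Convert a memory limit with an optional binary suffix (Ki/Mi/Gi/Ti) into bytes."""
    match = re.fullmatch(r"(\d+)(Ki|Mi|Gi|Ti)?", limit)
    if match is None:
        raise ValueError(f"unsupported quantity for this sketch: {limit}")
    return int(match.group(1)) * BINARY_SUFFIXES.get(match.group(2), 1)


def invalid_pod_scope_resources(resources: dict) -> list[str]:
    """Return resource names that pod-scope spec.resources rejects."""
    names = set(resources.get("requests", {})) | set(resources.get("limits", {}))
    return sorted(n for n in names if n not in ("cpu", "memory") and not n.startswith("hugepages-"))
```

For the pod-only-resources example, cpu_max("1000m") gives "100000 100000" and memory_max("1Gi") gives 1073741824, matching the cgroup files; adding ephemeral-storage to the pod-level block is what the allow-list check catches.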

4.2 In-place resize meets ResizeDeferred

kubectl patch --subresource=resize works against spec.resources. A shrink applies in place, with no restart. A grow on a contended 2 vCPU node does not:

```
kubectl get events | grep ResizeDeferred
4m30s   Warning   ResizeDeferred   pod/pod-only-resources
        Pod resize OutOfcpu: ...
        "error":"Node didn't have enough resource: cpu, requested: 750, used: 1450, capacity: 2000"
```

ResizeDeferred is the headline new event type. Pre-1.36, a grow without headroom either restarted the pod or silently went Infeasible. Now the pod stays Running at the old size, kubelet emits a structured event explaining exactly what’s missing, and keeps retrying. The new size eventually applies once Cluster Autoscaler or Karpenter frees capacity. .status.allocatedResources continues to reflect what’s actually reserved, so HPA, VPA, and node autoscaling all see the truth.
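Reading the deferred-resize message as "new request plus what is already used, against capacity", the grow above is 200m short (750 + 1450 - 2000). A sketch that extracts that shortfall from the event text; the interpretation of used and the millicore unit are assumptions, since the message format is not a documented API.

```python
import re

# Numbers as they appear in the ResizeDeferred message shown above.
_FIT = re.compile(r"requested: (\d+), used: (\d+), capacity: (\d+)")


def resize_shortfall(message: str) -> int:
    """Return how much more capacity the deferred resize needs (0 if it would fit).

    Assumes the message reads as: new request + already used vs node capacity,
    all in the same unit (millicores for cpu in the example above).
    """
    match = _FIT.search(message)
    if match is None:
        raise ValueError("message has no requested/used/capacity triple")
    requested, used, capacity = (int(x) for x in match.groups())
    return max(0, requested + used - capacity)
```

A shortfall figure like this tells you how much has to leave the node before the kubelet’s retries can succeed.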

5. Observability — PSI Metrics go GA

Pressure Stall Information has been Beta in Kubernetes since 1.34, and the PSI research note we published explains why it’s the right leading metric for horizontal pod autoscaling decisions when your pods don’t have CPU limits. In 1.36, KEP-4205 graduates the kubelet PSI integration to GA. The data surface is wider than it was in Beta — two endpoints expose the same kernel signal.

5.1 The cAdvisor Prometheus endpoint

Same path as Beta, now fully populated. The counters are in seconds; apply rate(...[1m]) and you get the fraction of the last minute the cgroup spent stalled on each resource:

```
container_pressure_cpu_stalled_seconds_total{...,pod="psi-stress"}     0.159446
container_pressure_cpu_waiting_seconds_total{...,pod="psi-stress"}     0.161832
container_pressure_io_stalled_seconds_total{...,pod="psi-stress"}      0
container_pressure_memory_waiting_seconds_total{...,pod="psi-stress"}  0
```

5.2 Summary API: the new structured surface

The bigger 1.36 win is on the kubelet Summary API (/stats/summary). PSI is now a first-class object on every container and on the pod aggregate:

```json
{
  "podRef": { "name": "psi-stress", "namespace": "default" },
  "containers": [{
    "name": "stress",
    "cpu": {
      "usageNanoCores": 200300201,
      "psi": {
        "full": { "avg10": 70.87, "avg60": 20.71, "avg300": 4.73, "total": 16114670 },
        "some": { "avg10": 73.9, "avg60": 21.59, "avg300": 4.93, "total": 16713107 }
      }
    },
    "memory": { "psi": { "full": { "avg10": 0, "..." : "..." }, "some": { "avg10": 0, "..." : "..." } } },
    "io": { "psi": { "full": { "avg10": 0, "..." : "..." }, "some": { "avg10": 1.59, "avg60": 0.46, "..." : "..." } } }
  }]
}
```

That’s the full PSI tuple (some and full, three rolling averages over 10s/60s/5min, and a cumulative total) as machine-readable JSON. Controllers that consume the Summary API (metrics-server, custom HPA adapters, in-cluster autoscalers) get PSI as structured data, not a string-parsed Prometheus dump. cpu.psi.full.avg10 = 70.87 means all non-idle tasks in this cgroup were stalled on CPU at the same time for 70.87% of the last 10 seconds. That is an unambiguous “this container is starved” signal, and one that distinguishes CFS quota throttling from real CPU starvation.
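Because the Summary API already carries the rolling averages, a consumer does not need to differentiate counters. A minimal sketch that reads /stats/summary output (for example from kubectl get --raw /api/v1/nodes/<node>/proxy/stats/summary) and lists containers whose cpu.psi.full.avg10 crosses a threshold; the 50.0 default is a hypothetical value, not a Kubernetes recommendation.

```python
import json


def starved_containers(summary_json: str, threshold_pct: float = 50.0) -> list[tuple[str, str, float]]:
    """Return (pod, container, cpu.psi.full.avg10) for containers at or above the threshold."""
    summary = json.loads(summary_json)
    hits = []
    for pod in summary.get("pods", []):
        pod_name = pod["podRef"]["name"]
        for container in pod.get("containers", []):
            full = container.get("cpu", {}).get("psi", {}).get("full", {})
            avg10 = full.get("avg10")
            if avg10 is not None and avg10 >= threshold_pct:
                hits.append((pod_name, container["name"], avg10))
    return hits
```

Swapping cpu for memory or io, or full for some, reads the other PSI series from the same structure.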

5.3 The thing that didn’t ship — yet

Honest finding the K8s release notes don’t surface: PSI metrics being GA does not mean PSI-driven node conditions are on. During sustained stress we watched the node:

```
kubectl describe node | sed -n '/Conditions:/,/Addresses/p'
Conditions:
  Type                 Status  Reason                       Message
  NetworkUnavailable   False   FlannelIsUp                  Flannel is running
  MemoryPressure       False   KubeletHasSufficientMemory   ...
  DiskPressure         False   KubeletHasNoDiskPressure     ...
  PIDPressure          False   KubeletHasSufficientPID      ...
  Ready                True    KubeletReady                 ...
```

No CPUPressure or IOPressure node conditions appeared, even under sustained load. The kubelet configuration exposed only evictionPressureTransitionPeriod; there was no psiCpuThreshold, no psiIoEvictionThreshold, and no other field that would wire PSI signals into the existing eviction manager. The auto-tainting and PSI-driven eviction layer on top of these metrics has not shipped in 1.36: the kubelet publishes the same node-pressure conditions it always did, and it evicts on memory, disk, and PID pressure but not on the CPU or I/O pressure that PSI measures.

Cluster operators that want to act on PSI in production today implement the control loop themselves — scraping the Summary API or the /metrics/cadvisor endpoint, evaluating thresholds against fields such as cpu.psi.full.avg10 or io.psi.some.avg60, and routing the resulting signal into whichever horizontal pod autoscaling, vertical pod autoscaling, or rightsizing pipeline already runs on the cluster. The metrics surface is production-ready in Kubernetes 1.36; the response pipeline that consumes those metrics remains an external concern for the operator or the policy controller layered above the kubelet.
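The control loop described above has two moving parts: turning cAdvisor counters into a stall fraction, and deciding when that fraction counts as sustained pressure. A sketch of both under stated assumptions: two samples of a *_seconds_total counter taken interval_s apart, and a hypothetical threshold plus hold time. Everything downstream (tainting, scaling, rightsizing) is left to the caller, as it is in 1.36.

```python
from dataclasses import dataclass, field
from typing import Optional


def stall_fraction(prev_s: float, curr_s: float, interval_s: float) -> float:
    """Fraction of an interval spent stalled, from two samples of a *_seconds_total counter.

    This is the quantity rate(...[1m]) estimates in PromQL. A counter reset
    (curr < prev) is clamped to zero rather than reported as negative.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return max(0.0, curr_s - prev_s) / interval_s


@dataclass
class PressureGate:
    """Report pressure only after the signal stays at or above `threshold` for `hold_s` seconds."""

    threshold: float  # e.g. 0.5 means stalled for half of the interval (hypothetical)
    hold_s: float     # how long the signal must persist (hypothetical)
    _since: Optional[float] = field(default=None, repr=False)

    def update(self, now_s: float, fraction: float) -> bool:
        if fraction < self.threshold:
            self._since = None  # any dip below the threshold resets the timer
            return False
        if self._since is None:
            self._since = now_s
        return now_s - self._since >= self.hold_s
```

The hold time is the design choice that matters: without it, a single noisy avg10 spike would trigger whatever action the loop drives.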

6. KEP-5304: DRA Device Attributes Downward API in Kubernetes 1.36

KEP-5304 introduces a mechanism by which DRA drivers can hand device metadata directly to a workload by mounting a JSON file at /var/run/dra-device-attributes/<claim>/<request>/<driver>-metadata.json inside the container, removing the need for the sidecar pattern that today reads ResourceSlice.spec.attributes from the Kubernetes API. The use cases this targets include KubeVirt-style workloads that need PCI bus identifiers, SR-IOV network interface cards that need link metadata, and any other workload that currently runs an in-pod controller to extract device attributes from the cluster API.

The feature is Alpha in Kubernetes 1.36 and the NVIDIA DRA driver chart 25.12.0 does not yet implement it on the driver side:

```
kubectl exec g -- ls /var/run/dra-device-attributes/
ls: cannot access '/var/run/dra-device-attributes': No such file or directory
```

The Kubernetes framework is in place (the kubelet code path that mounts the metadata file is present and the alpha API has been merged), but the vendor driver has not yet implemented its side of the contract. Until a future NVIDIA driver release adds the metadata mount, applications that need device attributes continue to read ResourceSlice.spec.attributes from the Kubernetes API through a sidecar.
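Once a driver implements its side of KEP-5304, a workload can prefer the mounted file and fall back to the API path otherwise. A sketch assuming only the directory layout quoted above; the metadata schema is not described here, so the file is returned as a generic dict, and the claim, request, and driver names in the test are hypothetical.

```python
import json
from pathlib import Path
from typing import Optional

# Base directory quoted in the KEP-5304 description above.
DEFAULT_BASE = Path("/var/run/dra-device-attributes")


def device_metadata_path(claim: str, request: str, driver: str, base: Path = DEFAULT_BASE) -> Path:
    """Build <base>/<claim>/<request>/<driver>-metadata.json."""
    return base / claim / request / f"{driver}-metadata.json"


def load_device_metadata(
    claim: str, request: str, driver: str, base: Path = DEFAULT_BASE
) -> Optional[dict]:
    """Return the parsed metadata, or None when the driver has not mounted the file.

    None is the case observed above with NVIDIA chart 25.12.0; the caller then
    falls back to reading ResourceSlice attributes through the Kubernetes API.
    """
    path = device_metadata_path(claim, request, driver, base)
    if not path.is_file():
        return None
    return json.loads(path.read_text())
```

Keeping the fallback decision in the caller means the same image runs unchanged before and after the driver ships the mount.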

7. How ScaleOps Extends the 1.36 Primitives

Every section above ends with K8s shipping a primitive — partition advertising, gang correctness, pod-scope resize, leading-indicator pressure metrics — and acknowledging that the policy layer above them is left for someone else to build. That is the seam this article is really about. Kubernetes 1.36 resource management gives you better inputs to existing tools; it does not change the outputs. Cluster Autoscaler, Karpenter, the default scheduler, horizontal and vertical pod autoscaling, KEDA — all stay in place and operate as before, now with richer signals to act on. ScaleOps slots into that same layer, working with those native primitives rather than replacing any of them, and treating each new 1.36 surface as an input to its policy decisions.

| 1.36 feature | What K8s gives you | What ScaleOps adds |
|---|---|---|
| DRA Partitionable + Consumable + Taints + Health (Beta default-on) | Device plumbing: partition advertising, capacity budgets, taint-driven eviction, health field on Pod status | Automated Fractional GPUs manages fractional GPU allocation on top of the new primitives. GPU Memory Optimization reduces the GPU memory footprint of workloads sharing a partitioned device. Smart Pod Placement schedules based on real-time demand, treating partitioned ResourceClaims as first-class allocation targets. Node Optimization provides context-aware node management so GPU nodes match what the partitions actually request. |
| Workload-Aware Preemption Alpha + Gang Scheduling Beta | PodGroup-aware scheduling and preemption: correctness primitives for AI training | Smart Pod Placement schedules a PodGroup based on real-time demand and treats it as a single placement unit. Karpenter Optimization maximizes Karpenter savings by sizing node pools to the gang shape. Spot Optimization runs more pods on Spot: batch PodGroups can land on spot capacity while latency-sensitive inference replicas stay on on-demand in the same cluster. |
| Pod-Level Resources Beta | Pod-scope CPU/memory/hugepages envelope | Real-Time Pod Rightsizing delivers continuous CPU and memory optimization at pod scope. Java Resource Management optimizes JVM memory so Java services honor a resize. |
| PSI Metrics GA + Summary API | Production-ready leading-indicator metrics for CPU/memory/IO pressure, per container | Replicas Optimization scales ahead of demand, raising replica floors before pressure translates into latency. Real-Time Pod Rightsizing consumes PSI as a context signal for autonomous CPU and memory decisions. |

The pattern is the same across every row: Kubernetes 1.36 resource management ships primitives, ScaleOps runs the policy on top. Roku used the combination of Kubernetes-native primitives plus ScaleOps policy across 110+ Kubernetes clusters and reduced vCPU consumption by 32% at 20% rollout coverage (Roku case study); the 1.36 primitives make that pattern more accurate, not redundant. ScaleOps works with your existing Cluster Autoscaler, Karpenter, default scheduler, horizontal pod autoscaling, and KEDA configuration — no migration, no rearchitecture.

Try ScaleOps free → See where the 1.36 primitives land in your own cluster — partition advertising, PSI signals, pod-scope resources — and where the policy layer above them is still doing nothing.

Book a demo → Walk through how the same defaults-flip features map to your AI training and inference workloads with our team, and see what GPU Optimization, Smart Pod Placement, and Automated Pod Rightsizing change once the new K8s primitives are feeding them.

8. Frequently Asked Questions: Kubernetes 1.36 Resource Management

What changed in Kubernetes 1.36 for resource management?

Six features that were previously opt-in flipped to default-on or graduated in Kubernetes 1.36 resource management. DRA Partitionable Devices, DRA Consumable Capacity, DRA Device Taints/Tolerations, and Resource Health Status all hit Beta default-on. Pod-Level Resources reached Beta default-on. PSI Metrics graduated to GA. Workload-Aware Preemption (KEP-5710) is the one brand-new Alpha worth opting into for AI training.

How are DRA partitionable devices different from device-plugin allocation in Kubernetes 1.36?

The device plugin allocates whole devices in integer counts. Kubernetes 1.36 DRA partitionable devices let the DRA driver expose a single physical device as multiple ResourceSlice entries — one per partition — each independently allocatable. On MIG-class hardware (A100/H100), DRA partitionable devices map to hardware-isolated GPU slices. The scheduler treats each partition like its own device.

What is gang scheduling in Kubernetes for AI training workloads?

Gang scheduling places all pods of a group together or not at all. In Kubernetes 1.36, gang scheduling through the Workload API and PodGroup (KEP-4671) moved to Beta. The scheduler holds the pods of a PodGroup until enough of them can be placed to satisfy gang.minCount, then schedules the group as a unit. This eliminates the failure mode that stalls distributed training jobs: 7 of 8 ranks scheduled and idling on their GPUs while the 8th stays Pending.
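The all-or-nothing rule can be illustrated with a toy first-fit placer. This is a hedged sketch, not kube-scheduler code: `try_place_gang`, the per-node free-GPU map, and the `min_count` parameter (standing in for gang.minCount) are hypothetical, and the real scheduler also filters and scores nodes.

```python
from __future__ import annotations


def try_place_gang(free_gpus: dict[str, int], pods: list[int], min_count: int) -> dict[int, str] | None:
    """All-or-nothing first-fit placement of one pod group.

    free_gpus: free GPUs per node; only updated if the gang is admitted.
    pods: GPU request of each pod in the group.
    min_count: pods that must fit before any of them is bound (stands in for gang.minCount).
    Returns pod index -> node name, or None if the gang cannot be satisfied.
    """
    trial = dict(free_gpus)  # plan on a copy so a failed attempt binds nothing
    placement: dict[int, str] = {}
    for i, need in enumerate(pods):
        for node, free in trial.items():
            if free >= need:
                trial[node] = free - need
                placement[i] = node
                break
    if len(placement) < min_count:
        return None
    free_gpus.update(trial)  # commit only once the threshold is met
    return placement


cluster = {"node-a": 4, "node-b": 3}  # 7 free GPUs in total

job_a = try_place_gang(cluster, [1] * 8, min_count=8)  # 8 ranks, 1 GPU each
print(job_a, cluster)
# -> None {'node-a': 4, 'node-b': 3}

job_b = try_place_gang(cluster, [1] * 4, min_count=4)
print(job_b, cluster)
# -> {0: 'node-a', 1: 'node-a', 2: 'node-a', 3: 'node-a'} {'node-a': 0, 'node-b': 3}
```

The 8-rank job binds nothing because only 7 GPUs are free, so no GPU is held by a rank that cannot start; the 4-rank job then fits as a unit.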

Kueue vs Volcano vs YuniKorn — which is better for Kubernetes gang scheduling AI training?

Native gang scheduling in Kubernetes 1.36 covers correctness for a single team running training. Kueue adds hierarchical queues, fair sharing, and clean integration with PyTorchJob, MPIJob, and RayJob. Volcano covers HPC topology constraints (NVLink, NUMA). YuniKorn offers strict multi-tenant quota hierarchies. See §3.5 for the full comparison table.

When should I use pod-level resources in Kubernetes 1.36?

In Kubernetes 1.36, use pod-level resources when a Pod has multiple containers and you want to express the resource envelope once instead of repeating the same numbers on every container. The Pod.spec.resources field accepts only cpu, memory, and hugepages-*; extended resources such as GPUs stay at container scope. Container-level requests and limits can still be set alongside it; they constrain that individual container inside the pod-level envelope.
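To make the envelope rules concrete, here is a hypothetical pre-flight check in Python. It assumes quantities are already normalized to integers (millicores for cpu, bytes for memory) and encodes two rules: only cpu, memory, and hugepages-* are allowed at pod scope, and, as an assumption about how the envelope is enforced, the containers' summed requests must not exceed the pod-level request. `pod_level_problems` is an illustrative name, not a Kubernetes API; the API server's own validation is the authority.

```python
from __future__ import annotations

POD_SCOPE_NAMES = ("cpu", "memory")  # plus any hugepages-<size>


def pod_level_problems(pod_requests: dict[str, int], container_requests: list[dict[str, int]]) -> list[str]:
    """Hypothetical pre-flight check for Pod.spec.resources.requests.

    Quantities are assumed to be pre-normalized integers (millicores for cpu, bytes for memory).
    """
    problems: list[str] = []
    for name in pod_requests:
        if name not in POD_SCOPE_NAMES and not name.startswith("hugepages-"):
            problems.append(f"{name}: not supported at pod scope, keep it on the container")
    for name, pod_value in pod_requests.items():
        total = sum(c.get(name, 0) for c in container_requests)
        if total > pod_value:  # assumed rule: containers must fit inside the envelope
            problems.append(f"{name}: containers request {total}, pod envelope is {pod_value}")
    return problems


pod = {"cpu": 2000, "memory": 4 * 2**30, "nvidia.com/gpu": 1}
containers = [{"cpu": 1500, "memory": 2 * 2**30}, {"cpu": 750}]
for problem in pod_level_problems(pod, containers):
    print(problem)
# nvidia.com/gpu: not supported at pod scope, keep it on the container
# cpu: containers request 2250, pod envelope is 2000
```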

How does workload-aware preemption in Kubernetes scheduling improve GPU utilization?

Without workload-aware preemption, the scheduler preempts pod by pod. To make room for a higher-priority workload it may evict 1 of the 4 ranks of training job A; the 3 surviving ranks cannot make progress without their peer, so they sit idle holding GPUs. Workload-aware preemption (KEP-5710) treats a PodGroup as a single preemption unit: a training job is either preempted as a whole, freeing all of its GPUs, or left intact. Either way, no GPU stays pinned by a job that cannot run.
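The preemption difference can be shown with a toy model that compares per-pod victim selection against whole-workload victim selection on a single node, counting "stranded" pods: survivors whose workload lost some of its members. It rests on simplifying assumptions (one node, priority as the only criterion, no PodDisruptionBudgets or graceful termination), and the names `RunningPod`, `per_pod_victims`, and `per_workload_victims` are hypothetical; they do not mirror the KEP-5710 implementation.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RunningPod:
    name: str
    workload: str  # hypothetical PodGroup / job name
    priority: int
    gpus: int


def per_pod_victims(pods: list[RunningPod], needed: int) -> list[RunningPod]:
    """Evict individual pods, lowest priority first, until enough GPUs are freed."""
    victims: list[RunningPod] = []
    freed = 0
    for pod in sorted(pods, key=lambda p: p.priority):
        if freed >= needed:
            break
        victims.append(pod)
        freed += pod.gpus
    return victims


def per_workload_victims(pods: list[RunningPod], needed: int) -> list[RunningPod]:
    """Evict whole workloads, lowest priority first, until enough GPUs are freed."""
    groups: dict[str, list[RunningPod]] = {}
    for pod in pods:
        groups.setdefault(pod.workload, []).append(pod)
    victims: list[RunningPod] = []
    freed = 0
    for members in sorted(groups.values(), key=lambda ms: min(p.priority for p in ms)):
        if freed >= needed:
            break
        victims.extend(members)
        freed += sum(p.gpus for p in members)
    return victims


def stranded(pods: list[RunningPod], victims: list[RunningPod]) -> list[RunningPod]:
    """Surviving pods whose workload lost at least one member to preemption."""
    hit = {v.workload for v in victims}
    evicted = {v.name for v in victims}
    return [p for p in pods if p.workload in hit and p.name not in evicted]


# One node with 8 GPUs, all in use by two 4-rank training jobs (1 GPU per rank).
pods = [RunningPod(f"job-a-{i}", "job-a", 10, 1) for i in range(4)]
pods += [RunningPod(f"job-b-{i}", "job-b", 20, 1) for i in range(4)]

for pick in (per_pod_victims, per_workload_victims):
    victims = pick(pods, needed=2)  # a higher-priority pod needs 2 GPUs
    print(f"{pick.__name__}: {len(victims)} evicted, {len(stranded(pods, victims))} stranded")
# per_pod_victims: 2 evicted, 2 stranded
# per_workload_victims: 4 evicted, 0 stranded
```

Whole-workload selection evicts more pods in this example, but it frees 4 GPUs and strands nothing, whereas per-pod selection frees 2 GPUs and leaves 2 ranks of job-a holding GPUs they cannot use.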

How do Kubernetes PSI metrics, now GA, help detect resource pressure?

PSI (Pressure Stall Information) metrics, generally available in Kubernetes 1.36 via KEP-4205, measure how much time tasks spent waiting for CPU, memory, or I/O, independent of CPU limits or throttling. They catch contention and starvation that CPU utilization percentages miss. In Kubernetes 1.36, PSI metrics are exposed per container via /metrics/cadvisor (container_pressure_cpu_*, container_pressure_memory_*, and container_pressure_io_*) and via /stats/summary.
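PSI values originate in the kernel's pressure files: system-wide under /proc/pressure/, and per cgroup v2 as cpu.pressure, memory.pressure, and io.pressure. The Python sketch below parses that text format and turns two samples of the cumulative `total` counter (microseconds) into a stall fraction. The helper names and the sample values are made up for illustration.

```python
from __future__ import annotations


def parse_psi(text: str) -> dict[str, dict[str, float]]:
    """Parse PSI text such as the contents of /proc/pressure/cpu or a cgroup's cpu.pressure."""
    result: dict[str, dict[str, float]] = {}
    for line in text.strip().splitlines():
        kind, *fields = line.split()  # kind is "some" or "full"
        result[kind] = {key: float(value) for key, value in (f.split("=", 1) for f in fields)}
    return result


def stall_fraction(total_before_us: float, total_after_us: float, interval_s: float) -> float:
    """Fraction of wall-clock time stalled between two samples of the cumulative total (microseconds)."""
    return (total_after_us - total_before_us) / (interval_s * 1_000_000)


sample = """some avg10=12.50 avg60=8.00 avg300=3.25 total=4000000
full avg10=0.00 avg60=0.00 avg300=0.00 total=0"""

psi = parse_psi(sample)
print(psi["some"]["avg10"])                        # 12.5 (percent over the last 10 s)
print(stall_fraction(4_000_000, 5_500_000, 10.0))  # 0.15
```

The avg10/avg60/avg300 fields are already percentages averaged by the kernel; differencing `total` is useful when you want your own window, for example between two scrapes.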

How do I check Kubernetes resource health status in a 1.36 cluster?

To check resource health status, run kubectl get pod <pod> -o jsonpath='{.status.containerStatuses[*].allocatedResourcesStatus}'. The field reports per-device health (Healthy, Unhealthy, or Unknown) for devices allocated through DRA or a device plugin. For conventional resource usage, kubectl describe pod <pod> shows requests and limits, and kubectl top pod shows live usage. For PSI metrics, use kubectl get --raw /api/v1/nodes/<node>/proxy/stats/summary | jq '.pods[].containers[].cpu.psi'.
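If you save the pod as JSON (kubectl get pod <pod> -o json), a few lines of Python can flag every device that is not Healthy. This sketch assumes each allocatedResourcesStatus entry has a `name` and a `resources` list of `{resourceID, health}` objects, following the shape described in KEP-4680; treat that as an assumption and check it against your own cluster's output. The claim name and device IDs in the sample are hypothetical.

```python
from __future__ import annotations

import json


def unhealthy_devices(pod: dict) -> list[tuple[str, str, str, str]]:
    """Return (container, resource name, device ID, health) for every device not reported Healthy."""
    found = []
    for cs in pod.get("status", {}).get("containerStatuses", []):
        for status in cs.get("allocatedResourcesStatus") or []:
            for device in status.get("resources") or []:
                health = device.get("health", "Unknown")
                if health != "Healthy":
                    found.append((cs["name"], status["name"], device["resourceID"], health))
    return found


# Trimmed, hypothetical output of `kubectl get pod <pod> -o json`.
pod_json = """
{"status": {"containerStatuses": [{
  "name": "trainer",
  "allocatedResourcesStatus": [{
    "name": "claim:gpus/gpu",
    "resources": [
      {"resourceID": "gpu-0", "health": "Healthy"},
      {"resourceID": "gpu-1", "health": "Unhealthy"},
      {"resourceID": "gpu-2", "health": "Unknown"}
    ]
  }]
}]}}
"""

for row in unhealthy_devices(json.loads(pod_json)):
    print(row)
```

Unknown is reported alongside Unhealthy on purpose: a device whose health cannot be determined is usually worth a look before rescheduling a long training run onto it.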

What does 1000m CPU mean in Kubernetes 1.36 resource settings?

1000m means 1000 millicores, equal to 1 full CPU core (or 1 vCPU on a cloud VM). 250m is a quarter core; 1500m is 1.5 cores. Memory uses different suffixes: Mi and Gi are powers of 1024 (mebibytes, gibibytes), while M and G are powers of 1000. Resource unit semantics did not change in Kubernetes 1.36: the kubelet still translates millicores and memory quantities into cgroup settings.
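Unit mistakes are easy to make in tooling, so here is a small Python converter for these suffixes. It is a deliberately partial sketch: `cpu_cores` and `memory_bytes` are illustrative helpers that ignore the rest of the Kubernetes quantity grammar (for example the E and P suffixes, or milli-units on memory).

```python
from __future__ import annotations

from decimal import Decimal

BINARY = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}  # powers of 1024
DECIMAL = {"k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12}    # powers of 1000


def cpu_cores(quantity: str) -> Decimal:
    """'250m' -> 0.25 cores; a bare number is already in cores."""
    if quantity.endswith("m"):
        return Decimal(quantity[:-1]) / 1000
    return Decimal(quantity)


def memory_bytes(quantity: str) -> int:
    """'128Mi' -> 134217728; binary suffixes are checked before decimal ones."""
    for table in (BINARY, DECIMAL):
        for suffix, factor in table.items():
            if quantity.endswith(suffix):
                return int(Decimal(quantity[: -len(suffix)]) * factor)
    return int(Decimal(quantity))


print(cpu_cores("1000m"), cpu_cores("250m"), cpu_cores("1500m"))  # 1 0.25 1.5
print(memory_bytes("1Gi"), memory_bytes("1G"))                    # 1073741824 1000000000
```

Decimal avoids float rounding on values like 0.1 cores, and checking binary suffixes first keeps "Gi" from being misread as "G".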

Why is my DRA Device Taint missing from kubectl api-resources?

The kube-apiserver is missing --runtime-config=resource.k8s.io/v1alpha3=true. Even though DRA Device Taints/Tolerations is Beta in Kubernetes 1.36, the DeviceTaintRule type lives at resource.k8s.io/v1alpha3. The feature gate (DRADeviceTaints=true) alone does not register the DeviceTaintRule API; the runtime-config flag does.

9. KEPs and Sources for Kubernetes 1.36 Resource Management

KEPs covered:

| KEP | Title | Status in 1.36 |
|---|---|---|
| KEP-4205 | Support PSI based on cgroupv2 | GA |
| KEP-4671 | Gang Scheduling using Workload Object | Beta |
| KEP-4680 | Resource Health Status on Pod Status | Beta default-on |
| KEP-4815 | DRA Partitionable Devices | Beta default-on |
| KEP-4817 | DRA ResourceClaim Status (device data) | Beta default-on |
| KEP-5055 | DRA Device Taints and Tolerations | Beta default-on |
| KEP-5304 | DRA Device Attributes Downward API | Alpha |
| KEP-5710 | Workload-Aware Preemption | Alpha |